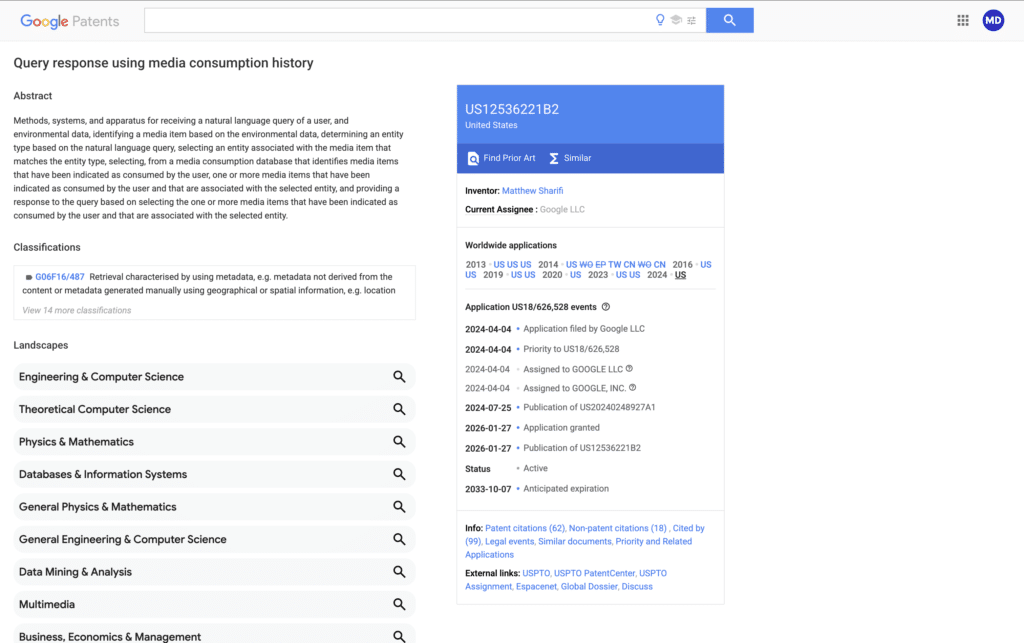

Google just patented a system for training and informing AI using your real-time media consumption history (US12536221B2).

The AI isn’t just waiting for you to type a query anymore; it’s contextually “living” alongside you. By analyzing the music, podcasts, videos, and articles you consume across your devices, Gemini builds a real-time map of your current situation.

Meaning: if you’re stuck in traffic while a navigation app is running and a calendar invite is approaching, Gemini doesn’t just see a delay. It understands the urgency. It may already have a “running late” message drafted, in the tone you usually use with that specific contact – before you even reach for your phone.

This is a clear confirmation of where Gemini is headed: the ultimate personal assistant.

Most AI today is reactive (you ask, it answers). This patent describes a proactive AI that uses your media “footprint” to anticipate what you’ll need next.

When you start a conversation with Gemini, it won’t start from zero. It will already know what you were just listening to, what news you just read, and what context you are currently in.

This bridges the gap between your YouTube history, your Spotify habits, and your Google Calendar, threading them into a single, coherent “memory” for the AI to use.

My Take:

Google just secured exclusive rights to its method for being the AI that knows what you’re watching.

While Google’s privacy policies already allowed it to “improve services” with your data, this patent gives it the competitive “moat” to finally turn Gemini into an assistant with its eyes and ears on your media history, without fear of rivals copying the approach.